One of the new feature that’s going to accompany in SQL Server 2016. There is a significant improvement in the hardware sector moving into SSD, flash disk etc which increases the cost of hardware. Holding all the historical or archieve data in to this high performing disks is going to cost you more, assuming you got larger volume of data. As a DBA we don’t want to include this historical data into our backup plan to avoid the backup size. We can choose partial backup but again the tradeoff is in the recovery plan.

To overcome these things, Microsoft introduces this new feature. This feature allows us to move the historical data to the cloud without procuring or doing additional maintenance tasks related to the data, by this way we can keep the current transactional data in the inhouse server. One of the good thing with this feature is that there is no need to do any changes to your existing queries, it works seemlessly. When you query against the table it will fetch the current data from inhouse and historical from cloud. Great!!

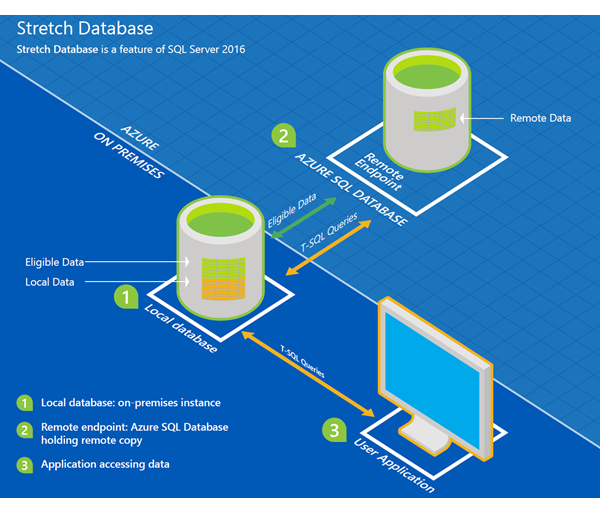

Architecture

Pic Courtesy : Microsoft

How it works

- When you enable a database to use this stretch feature, it will simply create a secured linked server between the in house and the azure.

- During configuration you will be providing azure credentials to which got the subscription. This will create a SQL Database in the azure with S3 performance level and the db version is equal to or greater than V12 (it won’t work if the db version is less than V12). I dont think we can downgrade it but you can upgrade it if you need more processing power.

- Now when you enable table to stretch the data from the in house, it will start moving out the elilgible data (I’m yet to test it to find what’s eligible 🙂 ) from in house to the cloud database.

- Now when you hit a query against this table it will run the query on both in house and cloud based on the data need. From an application point it doesnt know from where it is getting the data.

Advantages

- Seamless stretch to Azure cloud

- No maintenance overhead

- Processing power can be split across inhouse and azure cloud

Current OLTP data will be handled in inhouse server - You can increase the Azure SQL Database performance level for more power

Limitiates

- There are few limitations to enable a table as stretch table. I believe these are valid as we use these things in our OLTP environment so we dont want these tables to go to azure cloud.

- Even if the historical data is going to be used very rarely , you can’t downgrade the Azure SQL database performance level. i.e from S3 to S0 (We are in CTP 3.0 lets see if they change in RTM)

- Depends on network speed. Let’s say when I work with historical data my report or volume of data Im going to work might be be huge so getting it from might take some time.

- Azure cost will be billed monthly and it will be going on where as spending on hardware is one time. It depends on the business how they want to take it.

Leave a Reply